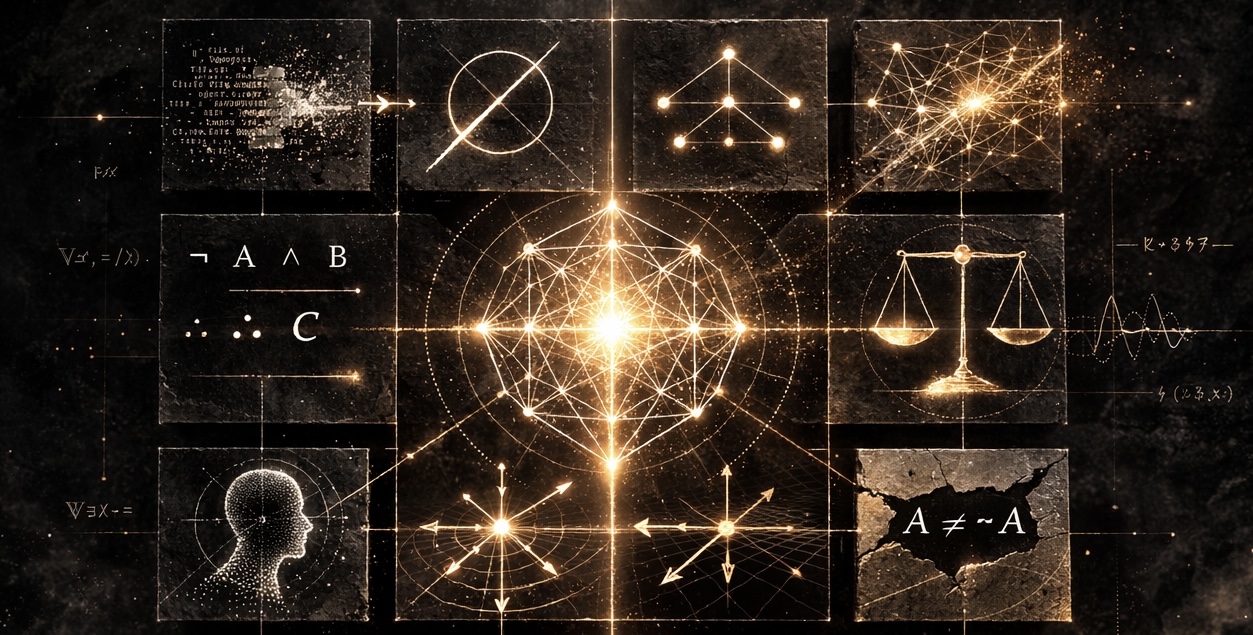

AI Research & Philosophy

Commentary and Analysis on AI Capabilities and Limitations

Latest Articles

Ordinal Systems Architecture: A Control Grammar for Enterprise AI Authority

Ordinal Systems Architecture (OrdSA) is a control-oriented enterprise architecture construct that orders AI capability by authority, abstraction, execution rights, and evidence flow. This paper introduces the construct, its governance principle, and its schema-first canonical form; positions it against related work (TOGAF, UAF, ArchiMate, NIST AI RMF, Zero Trust, IAM/PAM, policy-as-code, and DevSecOps); and walks through a worked deployment scenario showing allow, refuse, and escalate paths through the ordinal stack.

The 4M Model: A Reference Architecture for LLM Harness Engineering

A principled reference architecture organising LLM harness concerns into four modules with separated concerns and explicit coupling channels: Mission, Mind, Morals, and Memory.

AI Sycophancy, Dunning-Kruger, and the Discipline of Falsification

Sycophancy is not only a model-side behavior. It is an interaction pattern. The strongest guardrail is a user who wants truth more than agreement.

The Token Cliff: Why the AI Vendor Era Is Already Ending

You're not buying AI. You're renting cognition by the syllable. And the meter is always running.

Overtake AI, or It Will Surely Overtake You

The future of knowledge work belongs to people who integrate AI into their expertise. The rest will watch from the sidelines.

The Frontier Is Closed – And That's a Problem for National Defense

The U.S. Defense Industrial Base has cleared cloud access to frontier AI. But two structural gaps remain that no amount of IL authorization closes.

All Articles

May 2026

| Date | Article |
|---|---|
| May 21 | Ordinal Systems Architecture: A Control Grammar for Enterprise AI Authority |

April 2026

March 2026

February 2026

| Date | Article |
|---|---|
| | Two Superpowers, Same Question |
| | Last-Ditch Talks and Trust Demands: The Anthropic Standoff Continues |

Author

James (JD) Longmire

- ORCID: 0009-0009-1383-7698

- Email: jdlongmire@outlook.com

- GitHub: jdlongmire

- Substack: AI Research & Philosophy

AI Assistance Disclosure: This work was developed with assistance from AI language models including Claude (Anthropic), ChatGPT (OpenAI), Gemini (Google), Grok (xAI), and Perplexity. All substantive claims, arguments, and errors remain the author’s responsibility. Human-Curated, AI-Enabled (HCAE).

Archives

- Zenodo Community - Persistent DOI-minted archives

- AIDK Framework (DOI: 10.5281/zenodo.18316059) - Published framework

- GitHub Repository - Source code and development history