You’re not buying AI. You’re renting cognition by the syllable. And the meter is always running.

Every prompt. Every completion. Every summary, draft, extraction, and classification. Each one lands on a spreadsheet somewhere – input tokens, output tokens, price per million – and right now, most organizations aren’t looking at that spreadsheet very carefully. They will be.

The Math Nobody Shows You

Current frontier API pricing runs roughly 3 to 15 dollars per million input tokens and 15 to 60 dollars per million output tokens depending on model tier. That sounds small until you do enterprise arithmetic.

A mid-sized organization running AI across legal review, procurement analysis, customer communications, and internal knowledge retrieval isn’t doing a few thousand queries a day. It’s doing millions. At modest volume – say 5 billion tokens monthly across use cases, at a blended 3 to 15 dollars per million – you’re looking at 15,000 to 75,000 dollars per month. Per year: 180K to 900K. For one organization. Mid-sized.
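Because the whole argument rests on this arithmetic, it helps to be able to rerun it with your own numbers. Below is a minimal Python sketch, assuming list prices quoted in dollars per million tokens and charged separately for input and output; it ignores volume discounts, caching, and negotiated rates. The `Pricing` type, the function names, and the traffic figures in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical list prices, in USD per million tokens."""
    input_per_million: float
    output_per_million: float


def monthly_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """USD for one month of traffic: tokens / 1M * price, summed over input and output."""
    return (
        input_tokens / 1_000_000 * pricing.input_per_million
        + output_tokens / 1_000_000 * pricing.output_per_million
    )


def annual_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Twelve identical months; if traffic grows during the year, this understates the bill."""
    return 12 * monthly_cost(input_tokens, output_tokens, pricing)


# Example: 900M input + 100M output tokens per month at the low and high ends of the ranges above.
cheap = Pricing(input_per_million=3, output_per_million=15)
premium = Pricing(input_per_million=15, output_per_million=60)
print(monthly_cost(900_000_000, 100_000_000, cheap))    # 4200.0
print(monthly_cost(900_000_000, 100_000_000, premium))  # 19500.0
```

Splitting input from output matters because output tokens cost several times more, so verbose generation tasks move the bill far more than their share of traffic suggests.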

Scale that to a Fortune 500 with aggressive AI deployment and you’re in the tens of millions annually, paid to a vendor whose pricing you don’t control, on infrastructure you don’t own, for outputs you can’t fully audit.

That’s not a technology purchase. That’s a dependency.

The Trap

The on-ramp was cheap by design. Free tiers, generous developer credits, frictionless API access. The AI vendors needed adoption, and they got it. Developers built on the APIs. Products launched. Workflows hardened. Data pipelines got wired in. The switching cost quietly accumulated.

This is a classic platform playbook, and it works. Build the ecosystem, then price the ecosystem. The vendors aren’t villains – they’re rational actors. But organizations that walked in treating API access as a commodity utility are waking up to find they’ve been running a tab.

Lock-in here isn’t just contractual. It’s architectural. When your workflows assume a specific model’s output format, your prompts are tuned to one vendor’s quirks, and your downstream systems are calibrated to a particular failure mode, switching vendors is a project, not a toggle.

The Consultants

The Big 4 advisory practices and boutique AI shops selling transformation engagements are not showing you this math. They’re showing you capability benchmarks. MMLU scores. Reasoning evaluations. Context window sizes. They’re showing you what the model can do, not what it will cost to do it at scale.

This isn’t incompetence. It’s incentives. The firm that wins the 12-week AI roadmap engagement isn’t on the hook when the CFO asks why AI infrastructure costs tripled in year two. So the math doesn’t get shown. The pilot looks good. The ROI deck is optimistic. And the dependency deepens before anyone runs the numbers on what “fully deployed” actually costs.

The Wrong Question

“Which model should we use?” is dominating procurement conversations right now. Wrong frame.

The model is well on its way to becoming a commodity. The open-weight ecosystem has clearly demonstrated that capable models are not the exclusive property of frontier labs. Llama, Mistral, and their derivatives are closing the capability gap on a large share of enterprise use cases – the ones that don’t require frontier reasoning, just competent, fast, auditable execution at volume.

The question that matters: who designs the layer above the model? What deployment architecture governs how AI outputs enter your workflows, who validates them, what constraints they operate under, and how your organization retains authority over consequential decisions?

That’s an architecture question. Most organizations aren’t asking it.
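One way to picture that layer is as a gate between the model and the workflow. The sketch below is a minimal, hypothetical Python illustration rather than a reference design: every output must pass a set of constraint checks, and outputs for tasks that policy marks as consequential go to a person before they take effect. The `Disposition`, `ModelOutput`, and `govern` names and the example checks are assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Disposition(Enum):
    ACCEPT = "accept"              # enters the workflow automatically
    HUMAN_REVIEW = "human_review"  # a person signs off before it takes effect
    REJECT = "reject"              # fails a hard constraint; retry or escalate


@dataclass(frozen=True)
class ModelOutput:
    task: str
    text: str
    consequential: bool  # set by organizational policy for the task, never by the model


# A validator is any check that returns True when the output satisfies a constraint.
Validator = Callable[[ModelOutput], bool]


def govern(output: ModelOutput, validators: list[Validator]) -> Disposition:
    """Decide how a model output may enter a workflow."""
    if not all(check(output) for check in validators):
        return Disposition.REJECT
    if output.consequential:
        return Disposition.HUMAN_REVIEW
    return Disposition.ACCEPT


# Example constraints (hypothetical): non-empty, and short enough for the downstream field.
def not_empty(o: ModelOutput) -> bool:
    return bool(o.text.strip())


def fits_field(o: ModelOutput) -> bool:
    return len(o.text) <= 500


checks = [not_empty, fits_field]
print(govern(ModelOutput("ticket_summary", "Customer requests refund.", False), checks))
# Disposition.ACCEPT
```

The key design choice is that `consequential` comes from policy about the task, not from anything the model says about itself, so authority over high-stakes decisions stays with the organization.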

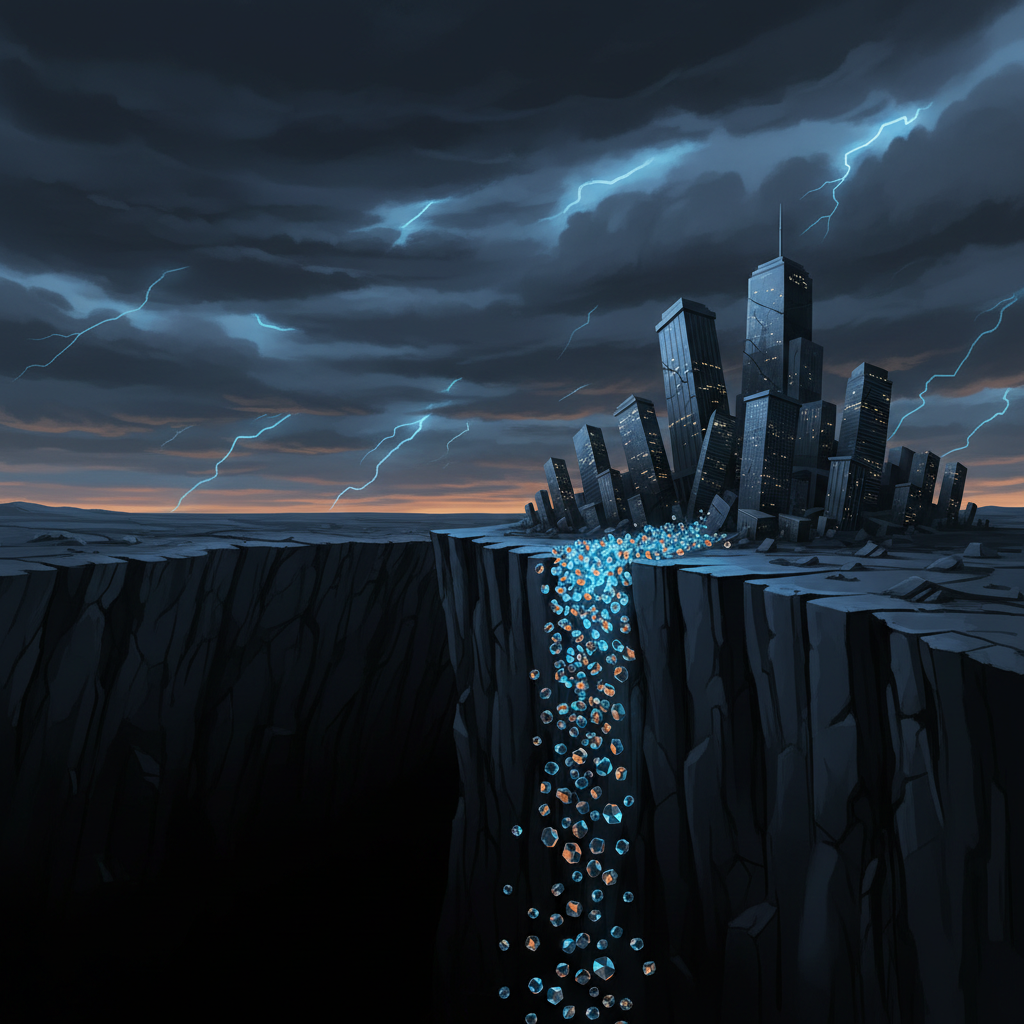

The Cliff

The math flips at a predictable point: the volume at which running your own models becomes cheaper than paying per token. That point isn’t the same for every organization, but the variables are consistent: token volume, use case complexity, data sensitivity requirements, and internal infrastructure capacity.

For many mid-to-large enterprises, that point is closer than their current roadmaps assume. When open-weight models in the 70B-parameter range handle 70–80% of your use cases at a fraction of the per-token cost, and that saving is multiplied across millions of monthly queries, the CFO conversation stops being theoretical.
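The flip point can be estimated with a simple break-even calculation: self-hosting carries a fixed monthly cost (hardware, hosting, the people who run it) plus a small marginal cost per token, while the API charges only per token. Here is a minimal Python sketch, assuming a single blended API price and treating the share of use cases an open-weight model can handle as a share of token volume, which is a simplification. All parameter names and example figures are hypothetical.

```python
import math


def breakeven_monthly_tokens(
    fixed_monthly_cost: float,             # USD/month: GPUs, hosting, ops staff
    api_price_per_million: float,          # blended USD per 1M tokens paid to the vendor
    self_hosted_price_per_million: float,  # marginal USD per 1M tokens once hardware exists
    offloadable_share: float,              # fraction of traffic an open-weight model can take
) -> float:
    """Total monthly tokens above which self-hosting the offloadable share beats the API."""
    if not 0 < offloadable_share <= 1:
        raise ValueError("offloadable_share must be in (0, 1]")
    saving_per_million = api_price_per_million - self_hosted_price_per_million
    if saving_per_million <= 0:
        return math.inf  # no marginal saving, so no volume ever covers the fixed cost
    millions = fixed_monthly_cost / (offloadable_share * saving_per_million)
    return millions * 1_000_000


def monthly_savings(
    total_tokens: float,
    fixed_monthly_cost: float,
    api_price_per_million: float,
    self_hosted_price_per_million: float,
    offloadable_share: float,
) -> float:
    """Net USD saved per month by self-hosting the offloadable share (negative means a loss)."""
    offloaded_millions = total_tokens * offloadable_share / 1_000_000
    saving_per_million = api_price_per_million - self_hosted_price_per_million
    return offloaded_millions * saving_per_million - fixed_monthly_cost


print(breakeven_monthly_tokens(30_000, 10, 2, 0.75))         # 5000000000.0
print(monthly_savings(10_000_000_000, 30_000, 10, 2, 0.75))  # 30000.0
```

With these hypothetical inputs (30,000 dollars a month fixed, a 10-dollar blended API price, a 2-dollar marginal self-hosted cost, 75% of traffic offloadable), break-even lands at 5 billion tokens a month. Past that point, every additional offloaded million tokens saves the full price difference.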

The cliff isn’t a sudden drop. It’s a slope that gets steep faster than the AI enthusiasm cycle accounts for. Organizations that haven’t begun building internal capability for self-hosted or hybrid deployment are going to find themselves redesigning under cost pressure, which is the worst time to make architectural decisions.

The Architect

The organizations that come out ahead in the next five years will have made a different kind of investment. Not in model subscriptions. In ecosystem architecture – the human and technical infrastructure to deploy AI strategically: right model for the task, right governance tier for the stakes, right human authority at the right point in the loop.

You don’t ask which cloud provider is “best.” You architect around your requirements and use the infrastructure accordingly. The same logic is coming for AI. Frontier APIs for genuinely frontier tasks. Self-hosted capable models for high-volume commodity work. Hybrid architectures that route intelligently between them.
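A hybrid architecture needs some rule for deciding where each task goes. Below is a minimal, hypothetical routing policy in Python: hard data-handling constraints are checked first, then whether the task genuinely needs frontier reasoning, and everything else defaults to the cheaper self-hosted backend. The `Backend` values, `TaskProfile` fields, and task names are illustrative assumptions; a production router would likely also weigh latency, cost budgets, and observed quality per task.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    FRONTIER_API = "frontier_api"  # hypothetical: a vendor-hosted frontier model
    SELF_HOSTED = "self_hosted"    # hypothetical: an open-weight model on your own hardware


@dataclass(frozen=True)
class TaskProfile:
    name: str
    needs_frontier_reasoning: bool
    data_must_stay_internal: bool


def route(task: TaskProfile) -> Backend:
    """Pick a backend per task: hard constraints first, then capability, then cost."""
    if task.data_must_stay_internal:
        # Data-handling policy is a hard constraint, so it overrides capability preferences.
        return Backend.SELF_HOSTED
    if task.needs_frontier_reasoning:
        return Backend.FRONTIER_API
    # Everything else is high-volume commodity work: send it to the cheaper backend.
    return Backend.SELF_HOSTED


for task in (
    TaskProfile("invoice_field_extraction", False, False),
    TaskProfile("novel_regulatory_analysis", True, False),
    TaskProfile("employee_record_summary", False, True),
):
    print(task.name, "->", route(task).value)
# invoice_field_extraction -> self_hosted
# novel_regulatory_analysis -> frontier_api
# employee_record_summary -> self_hosted
```

Checking data constraints first means a task that needs frontier reasoning but touches restricted data stays in-house; in practice that combination may call for a larger self-hosted model or a human decision rather than a silent downgrade.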

The differentiator isn’t the model. It’s the architect.

What disappears: the integrator whose value proposition is “we help you use [Vendor X].” Vendor-specific expertise commoditizes when the vendor becomes interchangeable. What emerges: practitioners who can design and govern AI ecosystems, who understand deployment architecture and how to match capability to use case without vendor dependency.

The enterprises that come through the cost cliff intact will be the ones that already treat AI as infrastructure to be designed, governed, and owned.

The Close

The next competitive moat isn’t AI. Every competitor has API access. Every competitor can run the same models on the same benchmarks.

The moat is architecture. The capacity to design AI-enabled systems that don’t bleed margin at scale, don’t collapse when vendor pricing shifts, and don’t require the organization to trust outputs it has no capacity to trace back to evidence.

The token cliff is coming. The organizations that see it early and build accordingly won’t be scrambling when it arrives.
