When Your AI Vendor Becomes a Supply Chain Risk

On February 27, 2026, President Trump directed all federal agencies to stop using Anthropic’s technology. Defense Secretary Hegseth simultaneously designated Anthropic a “Supply Chain Risk to National Security,” a classification typically used for vendors tied to foreign adversaries, like Huawei, Kaspersky, and ZTE. A six-month phase-out period is in effect. Every military contractor now has to certify it isn’t conducting “commercial activity” with Anthropic. Anthropic has announced it will challenge the designation in court.

The dispute itself is straightforward. Anthropic refused to remove two contractual safeguards: restrictions on mass domestic surveillance and restrictions on fully autonomous weapons without human oversight. The Pentagon wanted unrestricted access under an “all lawful purposes” standard. Anthropic declined. The administration responded with the designation and also threatened to invoke the Defense Production Act to compel access. The legal and political dimensions of this story will play out over months. That’s not what this piece is about.

This piece is about the blast radius.

The Stack Problem

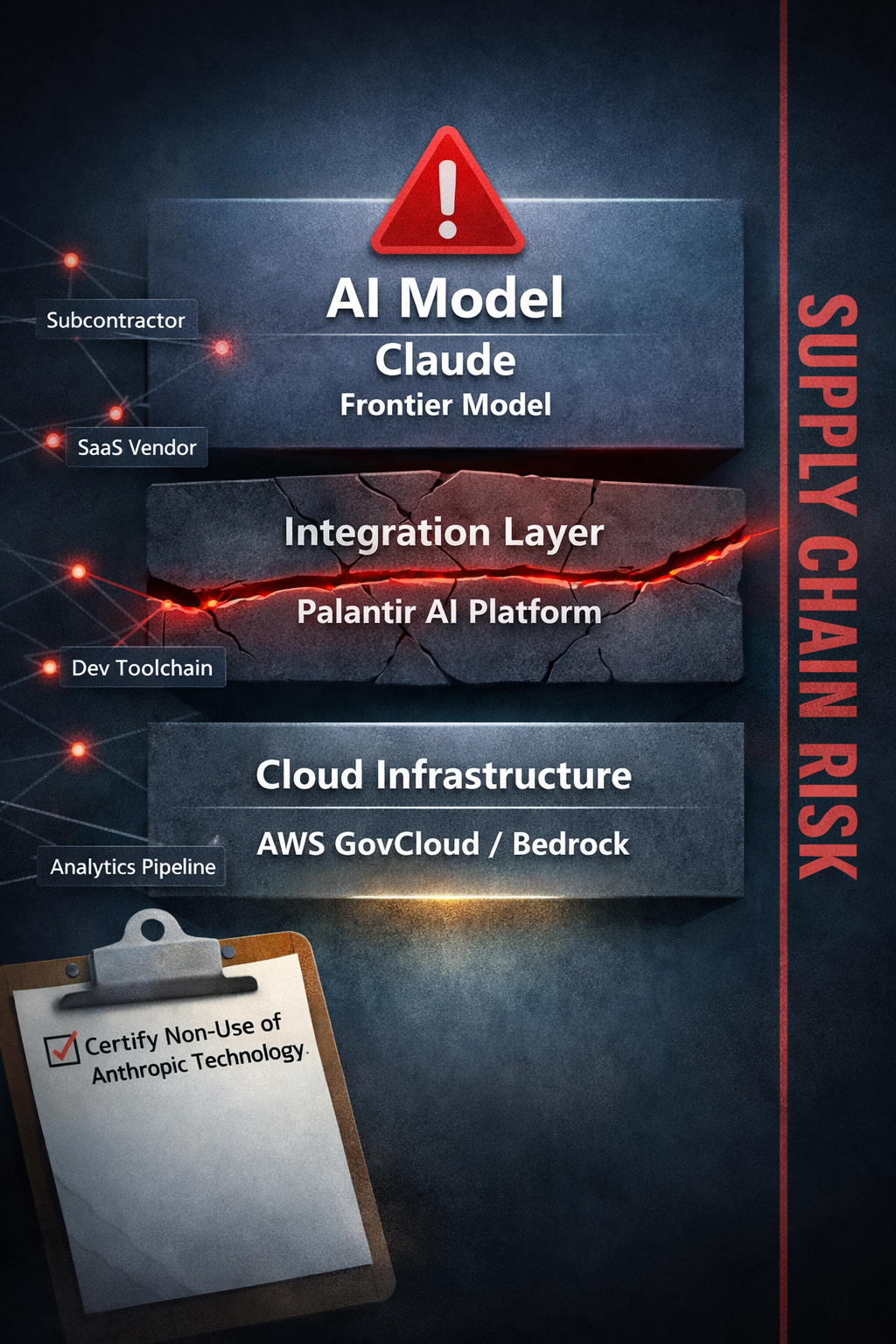

Claude is a key frontier AI model integrated into DoD classified networks, but Claude doesn’t reach those networks alone. It reaches them through a stack, and every layer of that stack now carries risk.

Amazon Web Services provides the cloud infrastructure. Palantir’s AI Platform provides the integration layer that places AI models into classified environments. Claude provides the model. These aren’t loosely coupled services. Amazon holds an $8 billion equity stake in Anthropic. AWS built an $11 billion data center, Project Rainier, exclusively for Anthropic workloads. Anthropic trains its models on Amazon’s proprietary Trainium chips. AWS achieved early FedRAMP High and DoD IL-4/5 authorizations for Claude models via Amazon Bedrock, positioning itself as the primary path for Anthropic into government environments.

The designation says no military contractor may conduct “commercial activity” with Anthropic. Nobody has defined what that means.

Tiers of Exposure

Start with the direct exposure and work outward.

If your organization uses Claude through Amazon Bedrock, through Palantir’s AI Platform, or through Anthropic’s API, you have a Tier 1 problem. The compliance trigger is immediate. You need to certify non-use or begin disentanglement within the phase-out window. This is the straightforward case, and if this is the only exposure you have, count yourself fortunate.

The harder problem is indirect exposure. If your programs operate on AWS GovCloud or use Palantir products, Claude may be embedded in upstream workflows, analytics pipelines, or platform features you didn’t specifically request. You cannot easily prove that none of the AI-enabled services in your stack touch Claude somewhere in the chain, and current guidance does not clearly define what counts as “commercial activity” for this purpose. The $8 billion financial entanglement between Amazon and Anthropic means a broad interpretation of the designation could implicate AWS services that never directly invoke Claude.

Then there’s the ambient layer. Subcontractors, vendors, development toolchains. Claude Code is widely adopted in enterprise software development. Analytics platforms and SaaS products integrate Claude via API. If your compliance obligation flows down through the contracting chain (and it likely will), you need visibility into every tier of your delivery stack.

The practical problem is that most organizations don’t have this visibility. They know what they bought. They often don’t know what their vendors built it on.
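One way to start closing that visibility gap is to treat your delivery stack as a dependency graph and search it for paths that end at the designated vendor. The sketch below is illustrative only: the inventory, the vendor names, and the rule that maps path length to a tier number are all assumptions of mine, not anything defined in the designation or in compliance guidance.

```python
from collections import deque

# Hypothetical inventory: each entry maps a vendor or tool to what it is built on.
# In practice this would come from contracts, SBOMs, and vendor attestations.
DEPENDS_ON = {
    "our-program": ["analytics-saas", "cloud-platform", "dev-toolchain"],
    "analytics-saas": ["designated-vendor"],
    "cloud-platform": [],
    "dev-toolchain": ["subcontractor-tool"],
    "subcontractor-tool": ["designated-vendor"],
}


def exposure_paths(graph, root, target):
    """Return every cycle-free dependency path from root to target (breadth-first)."""
    paths = []
    queue = deque([[root]])
    while queue:
        path = queue.popleft()
        for dep in graph.get(path[-1], []):
            if dep in path:  # skip cycles in the inventory
                continue
            new_path = path + [dep]
            if dep == target:
                paths.append(new_path)
            else:
                queue.append(new_path)
    return paths


def tier(path):
    """Assumed mapping: 1 hop = direct, 2 hops = indirect, 3 or more = ambient."""
    hops = len(path) - 1
    return min(hops, 3)


for p in exposure_paths(DEPENDS_ON, "our-program", "designated-vendor"):
    print(f"Tier {tier(p)}: " + " -> ".join(p))
```

The hard part is not the search. It is populating the inventory, because that requires your vendors to tell you what they built on.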

AWS and Palantir Face Their Own Reckonings

AWS and Palantir both face their own versions of this problem, and their responses will shape the landscape for everyone downstream.

AWS has remained publicly silent on the designation. This is not reassuring. Amazon announced a $50 billion investment in OpenAI on the same day as the blacklist, which looks like hedging. But the structural question for AWS is deeper than model substitution. Amazon’s investment in Anthropic isn’t a financial side bet; it’s woven into infrastructure. Project Rainier exists to serve Anthropic. Trainium was developed with Anthropic as a key customer. FedRAMP authorizations were obtained specifically for Claude. Unwinding this isn’t a software update. It’s an architectural question about what AWS’s AI services actually are when you remove the Anthropic layer.

Programs running on AWS GovCloud should be asking: which Amazon Bedrock services, SageMaker pipelines, or other AI features invoke Claude or Anthropic-derived models? Can AWS certify, in writing, that a given service does not involve Anthropic technology? What is AWS’s timeline for disentanglement, and what capabilities will change or degrade in the process?

Palantir’s situation is different in kind. Palantir’s AI Platform is the integration layer that placed Claude into classified DoD environments, including the Maven Smart System. Palantir also uses Anthropic models internally. Palantir will be compelled to certify non-use of Anthropic products for military contracts, which means Palantir has its own transition to manage before it can offer clean attestations to its customers.

The good news for Palantir is that its architecture is model-agnostic. The Maven Smart System can swap models. The bad news is that defense industry estimates put the transition at 3 to 12 months for equivalent capability, and the replacement model has to reach classified networks through accredited infrastructure, which brings us to the next question.

The Replacement Ecosystem

A replacement ecosystem is already forming, and it’s worth understanding the shape it’s taking.

Oracle Cloud Infrastructure has DISA IL5 and IL6 authorizations, supports Secret and Top Secret workloads through air-gapped National Security Regions, and just landed an $88 million Air Force contract for Cloud One. Palantir already has a strategic partnership with Oracle; its Gotham and AI Platforms are certified on OCI and deployable across classified regions. xAI’s Grok models are already live on OCI’s Generative AI service in Top Secret environments, and xAI has embraced an “all lawful purposes” posture for defense uses without Anthropic-style carveouts.

The transition path isn’t a simple model swap. The most coherent scenario assembles as a stack swap: from AWS/Palantir/Claude to OCI/Palantir/xAI. Same integration layer, different cloud, different model, different governance posture. No single public announcement confirms this as the decided path, but the partnerships, accreditations, and contractual postures at every layer already exist. If Palantir and defense primes move workloads to OCI to reach Grok in classified regions, AWS’s current centrality to defense AI will come under real pressure.

For businesses connected to this ecosystem, the compliance question isn’t “can we keep using Claude?” It’s “do we need to migrate our cloud infrastructure too?” That’s a fundamentally different conversation with fundamentally different timelines and costs. A model swap might take weeks. A cloud platform migration, with all the accreditation, data residency, and integration work that entails, takes quarters.

The Grounding Axis

I’ve spent the last year building a framework for understanding why AI deployments fail, and this situation illustrates the core problem with unusual clarity.

Most AI analysis focuses on what I call the horizontal axis: infrastructure, scaling, data pipelines. Does the technology work? Can it handle the load? That analysis says everything is fine here. Claude works. The infrastructure hums.

Some analysis reaches the vertical axis: epistemology, reasoning quality. Is the AI good at what it does? That analysis also says things are fine. Defense officials publicly praised Claude’s performance in classified environments.

The problem lives on what I call the grounding axis: purpose, and how the parts of a system relate to the whole. The grounding axis asks whether a system’s built-in purposes match the institution’s constraints. The designation, the DPA threat, the federal ban: none of these were responses to technical failure or poor reasoning. Every one was a response to a mismatch in purpose. Anthropic embedded limits into Claude that conflicted with how the Pentagon wanted to use it.

This distinction matters for businesses because it changes what you’re actually managing. If this were a horizontal-axis problem, you’d fix the infrastructure and move on. If it were a vertical-axis problem, you’d find a smarter model. But a grounding-axis problem means the vendor’s values and your institution’s requirements diverged. The technology was fine. The purposes weren’t aligned. And that kind of risk doesn’t show up in a technical due diligence review.

This Will Happen Again

Here’s why this matters beyond the current crisis: this will happen again.

OpenAI’s Sam Altman publicly stated he shares Anthropic’s red lines on surveillance and autonomous weapons. Over 200 engineers from top AI companies signed a letter supporting Anthropic’s position. Worker groups representing 700,000 employees across Amazon, Google, and Microsoft demanded their employers reject the Pentagon’s terms. The structural tension between commercial AI development culture and unrestricted military use is a permanent feature of this ecosystem.

xAI accepted the terms. The rest of the industry is watching to see what it costs Anthropic to refuse, and what it costs xAI to accept. But the lesson isn’t “never rely on Anthropic” or “just buy from xAI.” It’s that any frontier lab capable of building state-of-the-art models is also capable of asserting its own grounding. The next collision may come from a different direction. The underlying conflict over who sets the purpose of powerful models will remain.

Every defense program that hard-coded a single AI model into its architecture is now learning what model-agnostic design is for. Every program that treated its cloud provider as a neutral utility is now learning what vendor concentration risk looks like. And every business that didn’t ask “what is this vendor’s governance posture, and what happens when it conflicts with our customer’s requirements?” is asking it now.

The organizations that will weather this disruption, and the disruptions that follow, are the ones that architected for model portability and vendor independence before they needed it. That’s sound systems engineering independent of this dispute. It’s also the practical implication of taking the grounding axis seriously: build your systems so that when purposes diverge, you can swap the parts without rebuilding the whole.
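In code, model portability usually means application logic depends on a narrow interface rather than on any one vendor’s SDK. Here is a minimal sketch of that idea. Every name in it (`TextModel`, `VendorAModel`, `VendorBModel`, `load_model`) is hypothetical, and the adapters return canned strings where a real one would call a vendor API.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the application is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAModel:
    # Hypothetical adapter; a real one would wrap vendor A's SDK here.
    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBModel:
    # Hypothetical adapter for a second vendor behind the same interface.
    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


# The vendor choice lives in one place, driven by configuration.
REGISTRY = {"vendor-a": VendorAModel, "vendor-b": VendorBModel}


def load_model(name: str) -> TextModel:
    try:
        return REGISTRY[name]()
    except KeyError:
        raise ValueError(f"no adapter registered for {name!r}") from None


def summarize(model: TextModel, report: str) -> str:
    # Application code never names a vendor.
    return model.complete(f"Summarize: {report}")


print(summarize(load_model("vendor-a"), "quarterly readiness report"))
print(summarize(load_model("vendor-b"), "quarterly readiness report"))
```

Swapping vendors becomes a configuration change plus one new adapter. What the sketch does not solve is everything around the interface: prompt behavior that differs between models, evaluation, and the accreditation path for the replacement.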

The Question You Should Be Asking

If your business touches AWS, Palantir, or any vendor in the defense AI ecosystem, the time to audit your exposure is now. Not when the compliance guidance arrives. Not when the court challenge resolves. Now. And while you’re at it, answer the question this crisis is forcing on everyone: have you defined your own red lines for AI use, and do your current vendors share them or conflict with them?

JD Longmire is a Northrop Grumman Fellow and independent researcher in AI philosophy and epistemology. His frameworks for AI Dunning-Kruger (AIDK), the origination-derivation distinction, and Human-Curated AI-Enabled (HCAE) deployment tiers are available through the AI Research & Philosophy community on Zenodo (ORCID: 0009-0009-1383-7698).
