The Retreat from AGI

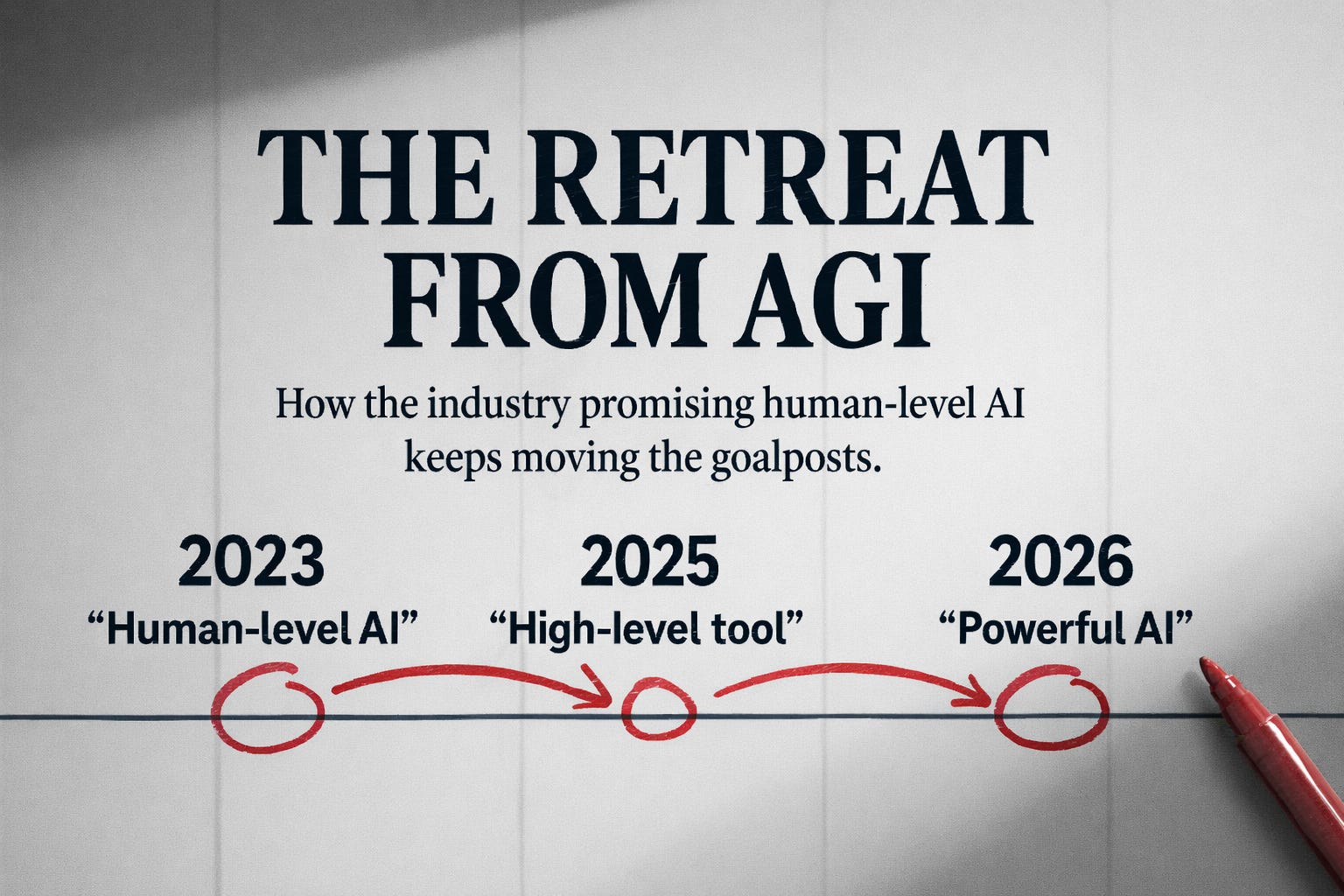

In January 2025, Sam Altman told the world he was “confident” OpenAI knew how to build AGI. Seven months later, after launching GPT-5 to muted applause, he told CNBC that AGI is “not a super useful term.” He has “many” definitions, he said, and none of them quite work.

That’s an extraordinary reversal from the man who raised hundreds of billions of dollars on that promise.

He’s not alone. Dario Amodei rebranded the target as “powerful AI”: systems matching Nobel laureates across disciplines, arriving in late 2026 or early 2027. Elon Musk predicted AGI by 2025, then by 2026. The AI 2027 team, who made headlines with their near-term forecast, recently pushed their median estimate to 2030–2035. Google DeepMind’s Shane Legg, who co-founded the company partly to build AGI, now gives it 50/50 odds by 2028 under a deliberately modest definition.

Notice what’s happening. The timelines aren’t just slipping. The thing being predicted keeps changing.

Definitional Retreat as Strategy

This pattern has a name in the philosophy of science. Imre Lakatos called it a degenerating problemshift: when a research programme responds to failed predictions by redefining its terms rather than revising its theory. The hard core stays protected. The goalposts move. And the programme’s defenders declare progress while the record shows accommodation.

I traced this pattern in detail in a recent paper (The AGI Mirage, Zenodo, 2026). The short version: the AGI-optimist programme has executed at least four major definitional shifts since 2020. First, AGI meant human-level intelligence across all cognitive domains. When systems couldn’t pass robust Turing Tests, the benchmark shifted to capability levels. When capability levels proved gameable (models that ace standardized tests but fail simple physical reasoning), the definition contracted to behavioral thresholds. Now Altman floats “doing a significant amount of the work in the world” and Sequoia Capital offers “the ability to figure things out.”

Each redefinition is narrower than the last. Each accommodates what current systems already do (or almost do). None generates a novel prediction that would distinguish genuine general intelligence from sophisticated pattern-matching. That’s the signature of degeneration.

Why the Timeline Keeps Slipping

The standard explanations focus on engineering: scaling laws hit diminishing returns, high-quality training data is exhausted, inference costs are unsustainable. These are real constraints. Gary Marcus, who by his own scoring got 16 of his 17 predictions for 2025 right, now says flatly that AGI won’t arrive in 2026 or 2027. The vibe has shifted.

But engineering constraints are the horizontal-axis story. They explain why it’s taking longer than expected. They don’t explain why the definition keeps changing.

The grounding-axis story is more revealing. The definition keeps changing because the original concept was never well-specified enough to succeed or fail. “Human-level intelligence across all cognitive domains” sounded precise until you asked what intelligence is, what a domain is, and what “across all” requires. The field never answered these questions. It just started building, on the assumption that sufficient scale would make the questions irrelevant.

Scale didn’t make the questions irrelevant. It made them unavoidable.

What the Retreat Tells Us

Three things become visible once you watch the definitional retreat as a pattern rather than a series of isolated recalibrations.

First, the field has no grounded definition of what it’s trying to build. This isn’t a quibble about terminology. When Altman says he has “many” definitions of AGI and none is “super useful,” he’s admitting that the target was never fixed. You can’t miss a target that was never there. You can only pretend you were aiming somewhere else.

Second, the shift to operational definitions is a retreat from the theoretical to the merely behavioral. And behavioral definitions have a well-known problem in philosophy of mind: they can’t distinguish between a system that understands and a system that behaves as if it understands. Searle made this argument forty-five years ago. The field didn’t refute it. It just stopped engaging with it.

Third, the financial incentives run in exactly one direction. Every major AI company needs the AGI narrative to justify its capital requirements. Anthropic is burning through $20 billion before profitability. OpenAI’s valuation depends on the promise that general intelligence is achievable and close. When the same people who need AGI to be imminent are the ones defining what AGI means, the epistemic situation is compromised. This isn’t a conspiracy claim. It’s an observation about incentive structures.

The Actual Question

None of this means current AI systems are useless. They’re remarkably capable derivative tools. The problem is the conceptual framework, not the technology.

The question was never “when will we get AGI?” That question presupposes a coherent target. The actual question is: what is the categorical difference between what these systems do and what human intelligence does, and can that difference be closed by scaling the same architecture?

I’ve argued elsewhere that the answer is no. Human cognition originates—it accesses novel configurations through causal contact with reality, embodied experience, and the capacity to know that it knows. Current AI systems derive—they transform inputs according to learned statistical patterns with no access to the grounds of their outputs. The distinction is categorical, which means it doesn’t yield to quantitative improvement. More parameters, more data, more inference compute: these extend derivation. They don’t produce origination.

The definitional retreat is what you’d expect if this analysis is correct. If the gap between derivation and origination is categorical, then every attempt to define AGI in terms achievable by derivation-only systems will require narrowing the definition. And that’s precisely what we’re watching.

So What?

If you’re an enterprise leader: stop waiting for AGI and start deploying AI within appropriate epistemic boundaries. The enterprise AI failure rates of 70–95% reported in industry surveys aren’t because the models aren’t good enough. They’re because organizations deploy AI without asking what the system is for and who supplies the judgment it structurally lacks.

If you’re a researcher: the retreat from AGI opens space for better questions. Instead of “how do we scale to general intelligence,” ask “what can derivation-only systems actually achieve, and where precisely does the boundary lie?” That’s a productive research programme. It generates testable predictions instead of receding timelines.

If you’re an investor: watch the definitions, not the demos. When the people building the thing start saying the name for the thing isn’t useful anymore, that’s information. It’s the most expensive “I don’t know” in the history of technology.

Lakatos put it well: it is perfectly rational to play a risky game. What is irrational is to deceive oneself about the risk. The AGI discourse has been deceiving itself for years. The retreat has begun. The question is whether the field will be honest about what it means.

James (JD) Longmire is a Northrop Grumman Fellow conducting independent research in AI philosophy. His work examines the structural limitations of AI systems through the origination-derivation framework. Academic papers on Zenodo. Follow AI Philosophy on Substack.

Developed under the HCAE model. Origination, judgment, and final authority on truth and validity remain with the human author.
