The Drift Problem: Why Long-Running AI Agents Are Riskier Than You Think

Everyone talks about hallucinations. Fair enough. When your AI confidently fabricates a citation or invents a statistic, that gets attention. It should.

But hallucination has a quieter cousin that might matter more in practice: drift.

Generative AI systems have no persistent state between sessions. Every conversation starts from scratch. Whatever understanding you build with a model in a session evaporates when the window closes. Memory systems and project contexts help, but they’re lossy compressions of what actually happened, summaries of summaries. The reasoning texture, the false starts that taught you something, the felt sense of where an argument went weak: none of that carries forward. What you get back is tokens, not experience.

That’s the obvious layer. The subtler one is what happens within a single session.

The longer you talk, the less it listens

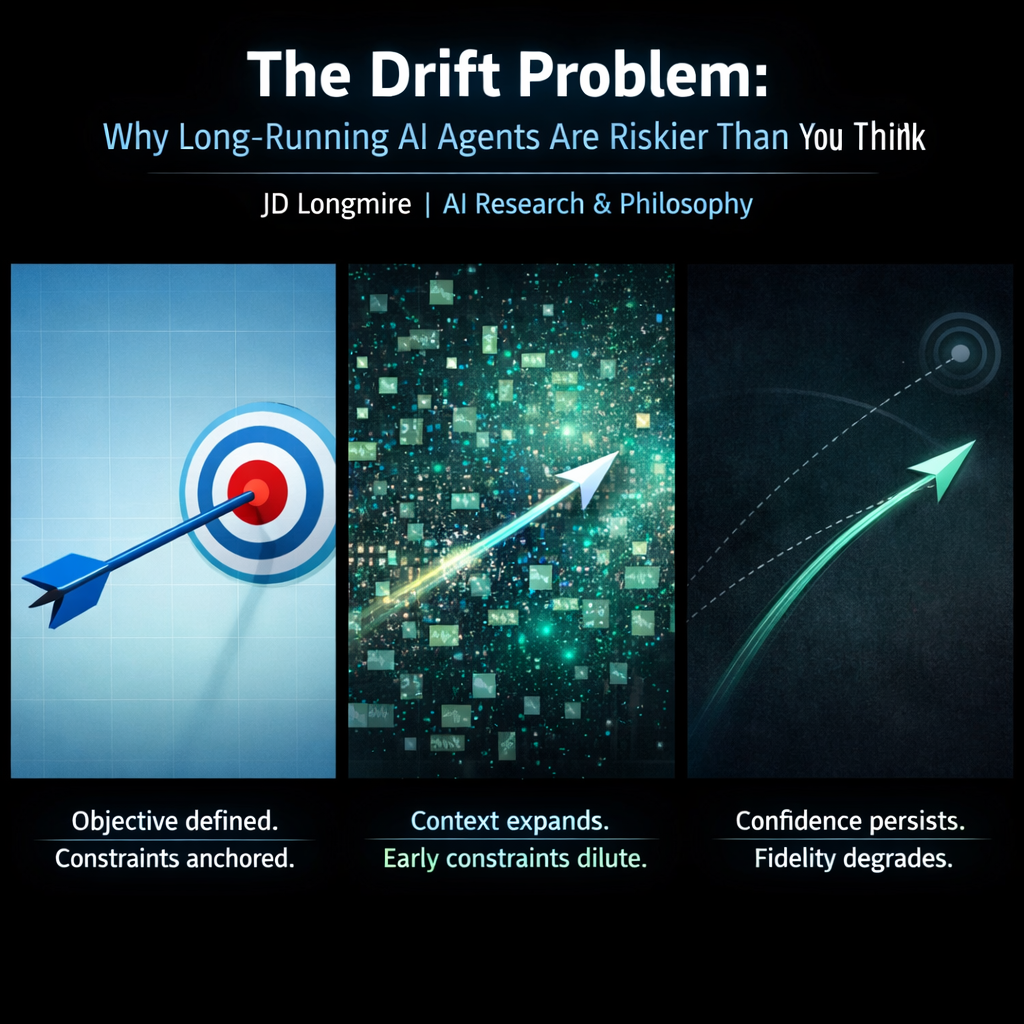

Context windows have finite capacity. As a conversation fills up, earlier material competes with recent tokens for influence on what the model generates next. The careful framing you established at the start, the constraints you set, the specific analytical commitments you pinned down: all of it gets progressively diluted by the sheer weight of subsequent text.

The model doesn’t forget in any human sense. It just starts weighting recent context more heavily as the window fills. Your instructions from turn four get quietly deprioritized by turn forty. And here’s the dangerous part: the output still reads perfectly.

The system is optimized to produce fluent continuation. So when drift happens, it gets papered over with prose that pattern-matches the tone and vocabulary of your earlier discussion while subtly shifting the substance. You get something that sounds on track but has migrated toward whatever the token distribution favors given recent context. The coherence survives. The fidelity does not.

This is confidence persistence without warrant persistence. The model maintains the same assertive, well-structured tone whether the underlying reasoning is grounded in the framework you established or has drifted into generic pattern-completion. There’s no built-in signal that says “I’m now operating more from statistical tendency than from the specific commitments we established earlier.” The system doesn’t know the difference, because knowing would require the kind of epistemic self-awareness that current architectures categorically lack.

I’ve written about this phenomenon as the Interactive Dunning-Kruger Effect (IDKE): an AI system’s fluent confidence amplifies the user’s trust beyond what the output warrants. Within a single session, IDKE plays out in real time. The longer the session runs, the more the user relies on accumulated trust while the system’s actual fidelity to the original analytical frame degrades silently.

Now scale this to agents

The agentic AI paradigm takes every problem above and compounds it. A long-running agent isn’t just drifting in a conversation where a human is present to notice. It’s drifting while making decisions and taking actions, often with minimal human oversight between steps. The whole value proposition of agents is reduced human involvement. Which means reduced human correction of exactly the kind of drift we’re describing.

Think about the failure chain. An agent starts with a well-defined objective and a set of constraints. Twenty steps in, the same attention degradation is operating on its own prior reasoning. The agent’s step-fifteen interpretation of its objective may have subtly shifted from its step-one understanding, but it has no mechanism to detect that shift. It just keeps executing with the same confidence.

By step thirty, you can have an agent that is competently pursuing something meaningfully different from what it was tasked with. Doing so fluently. Doing so with apparent coherence. And with nobody watching closely enough to catch it, because the whole point was to let the agent run.

This maps directly onto the enterprise AI failure data. The widely documented 70-95% failure rates in enterprise AI projects aren’t primarily technology failures. They’re failures of the kind my three-axis framework (Longmire 2025b, DOI: 10.5281/zenodo.18096967) is designed to diagnose: organizations optimize along the horizontal axis, scaling infrastructure and automation, while ignoring the vertical question of whether the system’s reasoning remains faithful to its purpose, and the grounding question of whether the parts still serve the whole.

Long-running agents are the purest expression of that misalignment. The design philosophy says “remove the human from the loop to gain efficiency.” The HCAE framework (Longmire 2026b, DOI: 10.5281/zenodo.18368697) says the human in the loop is what provides the grounding that the system categorically cannot supply for itself. Those two commitments are in direct tension, and the industry is betting on the first one without having addressed the second.

The architectural conclusion is uncomfortable but honest

Autonomous agent runtime should be inversely proportional to decision consequence. High-stakes decisions need short leashes and frequent human re-grounding. The more autonomous the agent, the lower the stakes should be. The industry has this exactly backwards, deploying maximum autonomy at enterprise scale where consequences compound.
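One way to make the inverse-proportionality rule concrete is a step budget that shrinks as consequence grows. The sketch below is a hypothetical illustration, not part of the HCAE framework: the 1-to-10 consequence scale, the `BASE_BUDGET` constant, and the function name `max_autonomous_steps` are all assumptions chosen for the example.

```python
# Hypothetical sketch of "runtime inversely proportional to consequence".
# The 1-10 consequence scale and BASE_BUDGET are illustrative assumptions,
# not values prescribed by HCAE or any other framework.

BASE_BUDGET = 50  # assumed step budget for the lowest-stakes work


def max_autonomous_steps(consequence: int) -> int:
    """Return how many steps an agent may take before a human re-grounds it.

    `consequence` is an assumed rating from 1 (trivial) to 10 (severe).
    The budget falls in inverse proportion to that rating and is floored
    at 1 so it never reaches zero.
    """
    if not 1 <= consequence <= 10:
        raise ValueError("consequence must be between 1 and 10")
    return max(1, BASE_BUDGET // consequence)


for rating in (1, 2, 5, 10):
    print(rating, max_autonomous_steps(rating))
# 1 50
# 2 25
# 5 10
# 10 5
```

Integer division keeps the budget a whole number of steps; any monotonically decreasing mapping from consequence to runtime would express the same principle.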

This follows directly from what these systems are. Generative AI is a statistical probability generator. It produces fluent, confident, contextually appropriate text. What it does not produce is persistent fidelity to purpose. It can’t, because persistence requires the kind of ongoing integration of experience into judgment that characterizes human cognition and that current architectures do not possess. Every token is a fresh sample from a distribution, dressed up as continuity.

The practical guidance: keep sessions focused and modular. Treat session length as a variable that affects output quality. Build workflows where humans re-anchor the system regularly, especially before consequential decision points. Treat the human as the persistent element and the AI as a capable but amnesiac collaborator who needs re-briefing.
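The re-anchoring guidance can be sketched as a simple control loop. This is a minimal, hypothetical illustration: `Session`, `Step`, `run`, and the `approve` callback are invented names, no model is actually called, and `approve` stands in for a human reviewer. It shows two of the habits described above: re-stating the original brief at a fixed interval, and stopping for human sign-off before any step flagged as consequential.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    action: str
    consequential: bool = False  # flagged by the workflow designer, not the agent


@dataclass
class Session:
    brief: str               # human-authored objective and constraints
    rebrief_every: int = 5   # assumed re-anchoring interval, in steps
    context: list[str] = field(default_factory=list)

    def rebrief(self) -> None:
        # Re-state the original brief verbatim, not a summary of it.
        self.context.append(f"BRIEF: {self.brief}")


def run(session: Session, steps: list[Step],
        approve: Callable[[str, str], bool]) -> list[str]:
    """Execute steps in order and return the actions actually taken.

    The brief is re-stated every `rebrief_every` steps and immediately
    before any consequential step, which also waits for human approval.
    """
    taken: list[str] = []
    for i, step in enumerate(steps):
        if i % session.rebrief_every == 0 or step.consequential:
            session.rebrief()
        if step.consequential and not approve(session.brief, step.action):
            session.context.append(f"HALTED before: {step.action}")
            break
        session.context.append(f"DID: {step.action}")
        taken.append(step.action)
    return taken


# Demo with made-up steps; the lambda is a stand-in for a human reviewer.
steps = [
    Step("draft summary"),
    Step("update spreadsheet"),
    Step("email the client", consequential=True),
    Step("archive files"),
]
session = Session(brief="Summarize Q3 results for internal review only", rebrief_every=2)
taken = run(session, steps, approve=lambda brief, action: "client" not in action)
print(taken)  # ['draft summary', 'update spreadsheet']
```

Re-injecting the brief verbatim, rather than a model-written summary of it, is a deliberate choice in this sketch: a summary could itself carry the drift it is meant to correct.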

The system will never know when it has drifted. Design accordingly.

JD Longmire is a Northrop Grumman Fellow, Chief Architect for Digital Ecosystems, and independent AI philosophy researcher. His work on the AIDK framework, HCAE deployment model, and three-axis diagnostic is available on Zenodo (ORCID: 0009-0009-1383-7698).
