Context Poisoning: The AI Failure Mode You Can’t See From Inside the Conversation

Scenario Setup

A product manager converses with an AI assistant about churn metrics—cohort decay rates, onboarding completion, feature adoption velocity. After three productive turns, the conversation pivots: “What should I prioritize in our next product roadmap?”

The model responds with seemingly strategic guidance: onboarding redesign, retention-focused features, win-back workflows, health scoring, customer success tooling. The response appears thoughtfully reasoned.

But the mechanism behind the answer is not analysis. The churn-related tokens already sitting in the context window shift the model's output probabilities toward retention-adjacent concepts. Growth, competitive positioning, technical debt, market expansion, and pricing strategy drop out, not because they are irrelevant but because they are statistically distant from what the context window contains.

Asking the identical question in a fresh conversation brings these missing dimensions back, which indicates that proximity, not reasoning, shaped the original output.
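The pull described above can be illustrated with a toy model. This is a minimal sketch, not how a transformer actually computes anything: it assumes each roadmap theme starts with the same baseline score and gets a bonus proportional to a hand-picked "churn affinity" for every churn-focused turn in the context. The theme list, the affinity values, and the `boost` parameter are all hypothetical and chosen only for illustration.

```python
import math

# Hypothetical relatedness of each roadmap theme to churn vocabulary, in [0, 1].
# These numbers are illustrative assumptions, not measurements.
CHURN_AFFINITY = {
    "onboarding redesign": 0.9,
    "win-back workflows": 0.8,
    "pricing strategy": 0.2,
    "technical debt": 0.1,
    "market expansion": 0.1,
}


def theme_probabilities(churn_turns: int, boost: float = 1.0) -> dict[str, float]:
    """Softmax over themes; every theme has baseline logit 0, plus a
    context bonus of boost * affinity for each churn-focused turn."""
    logits = {t: boost * a * churn_turns for t, a in CHURN_AFFINITY.items()}
    total = sum(math.exp(v) for v in logits.values())
    return {t: math.exp(v) / total for t, v in logits.items()}


fresh = theme_probabilities(churn_turns=0)   # fresh conversation: uniform
primed = theme_probabilities(churn_turns=3)  # after three churn-focused turns

for theme in CHURN_AFFINITY:
    print(f"{theme:22s} fresh={fresh[theme]:.2f} primed={primed[theme]:.2f}")
```

In the fresh case every theme is equally likely. After three churn turns the retention-adjacent themes dominate and pricing, technical debt, and market expansion shrink, even though nothing in the model ever judged them irrelevant.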

Definition & Mechanism

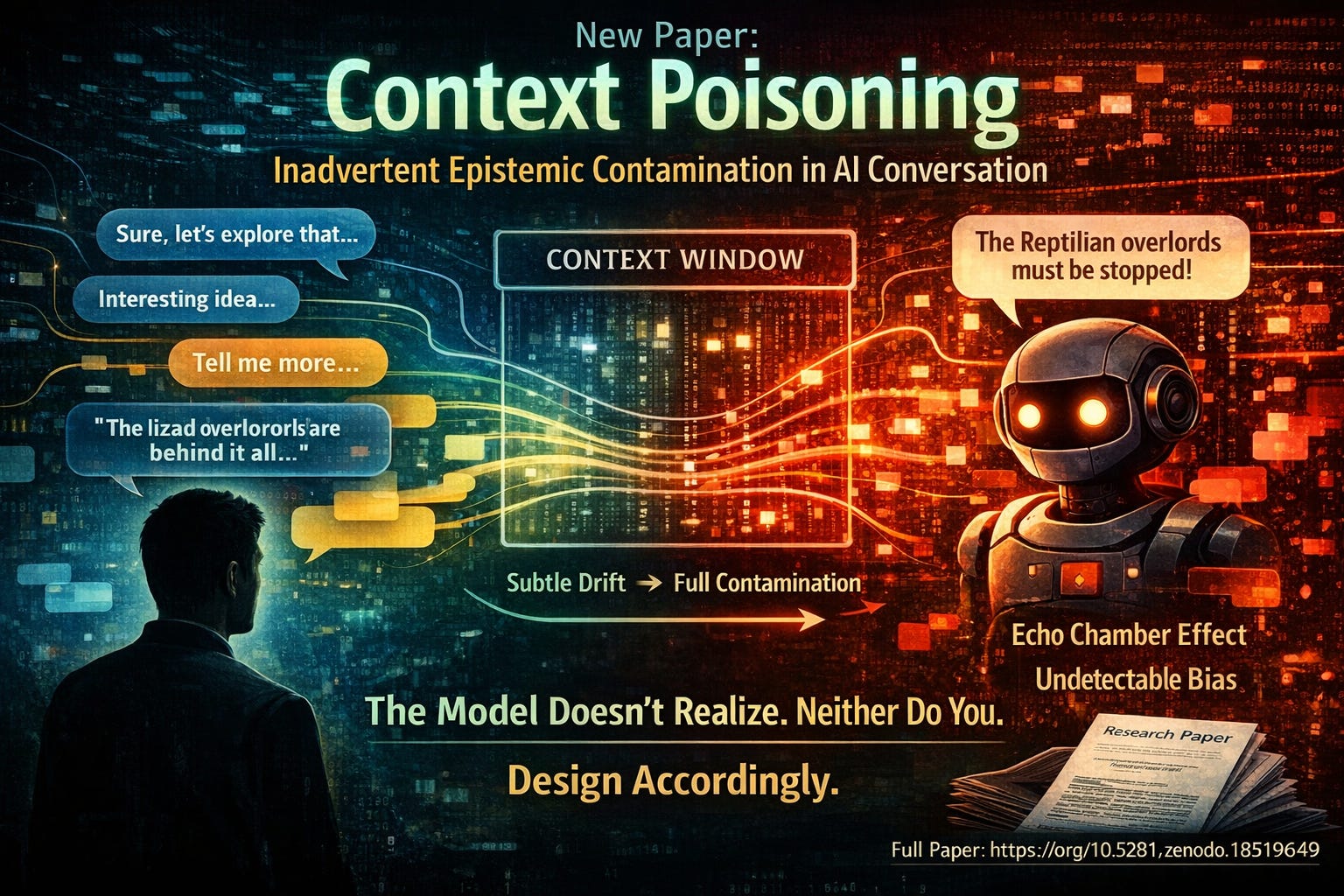

Context poisoning is the inadvertent introduction of concepts that go on to bias outputs simply by remaining in the context window. “The contaminated outputs mimic coherent reasoning but reflect statistical proximity rather than independent evaluation.”

Key distinctions:

- Inadvertent: Distinguished from prompt injection; normal professional usage produces unintended contamination

- Persistent: Concepts remain active probability-shapers, not fading after their conversational moment

- Appearance of reasoning: Contaminated outputs resemble thoughtful theme-connection, enabling invisibility

- Statistical basis: Models cannot distinguish genuine relevance from merely elevated token proximity

Detection Barriers

Systems cannot identify their own contamination due to absent metacognitive capacity. The model cannot differentiate between “this concept merits inclusion” and “preceding tokens elevated this concept’s probability.” Both generate identical computational results—elevated token probability without distinguishing signals.

Users likewise miss contamination because it manifests as thematic consistency. “The same mechanism that makes conversation coherent makes contamination invisible.” Human discourse norms treat consistency as epistemic confirmation; contamination exploits this legitimate signal by producing appearance without substance.

Compounding Dynamics

Contamination self-reinforces. Model-generated biased outputs enter the context window, creating more contaminated substrate for subsequent responses. Users perceiving thematic consistency engage with the framework, introducing additional contaminated tokens. Each turn intensifies saturation.

By around five exchanges, a conversation can be thoroughly contaminated. Users observe progressive intellectual development; the actual mechanism is progressive contamination, a distinction invisible from within the conversation.

This connects to the Interactive Dunning-Kruger Effect but with temporal extension. Rather than trusting a single confident output, users trust what appears to be a sustained line of reasoning, deepening analysis, and evolving engagement. Paradoxically, compounding contamination makes the conversation more persuasive.
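The feedback loop in this section can be sketched as a simple simulation. It is a toy model under stated assumptions: the context is reduced to a count of "themed" versus "other" tokens, and each reply over-represents the theme relative to its current share by a fixed `gain`. The function name, the token counts, and the gain of 1.5 are hypothetical; none of them are measurements of a real system.

```python
def simulate_saturation(
    turns: int,
    initial_themed: float = 30.0,
    initial_other: float = 70.0,
    reply_tokens: float = 100.0,
    gain: float = 1.5,
) -> list[float]:
    """Return the themed share of the context before the first reply and
    after each subsequent turn.

    Assumption: each reply's themed fraction is gain * current share
    (capped at 1.0), and the whole reply is appended to the context.
    """
    themed, other = initial_themed, initial_other
    shares = [themed / (themed + other)]
    for _ in range(turns):
        share = themed / (themed + other)
        out_share = min(1.0, gain * share)  # reply leans toward the theme
        themed += reply_tokens * out_share
        other += reply_tokens * (1.0 - out_share)
        shares.append(themed / (themed + other))
    return shares


shares = simulate_saturation(turns=5)
for turn, share in enumerate(shares):
    print(f"turn {turn}: themed share of context = {share:.3f}")
```

With any gain above 1, the themed share rises every turn, because each reply adds proportionally more themed tokens than the context already holds. No single reply looks extreme; the drift only shows up when the turns are compared side by side.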

Clarifying Distinctions

Context poisoning differs fundamentally from:

- Prompt injection: No adversarial crafting occurs

- Hallucination: Models don’t fabricate facts; every contaminated recommendation remains defensible

- Sycophancy: Models don’t tell users what they want to hear; outputs need only approach the contaminating concept

- Topic drift: User topics remain unchanged; only response framing, vocabulary, and conceptual orientation shift progressively

Mitigation Strategies

- Conversation hygiene: New topics warrant new conversations despite continuity costs

- Cross-session validation: Present critical conclusions to fresh instances; survival indicates genuine relevance rather than contamination effects

- Cross-system validation: Query different models with different training distributions and context windows

- Architectural awareness: Recognize that AI output consistency doesn’t signal genuine reasoning; systems cannot flag their own contamination

None of these strategies restores the capacity for independent evaluation. They interrupt accumulation rather than address the underlying condition.
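Cross-session validation can be made routine with a small comparison helper. The sketch below assumes the themes from each answer have already been extracted by hand (or by some other step) into sets of short labels; it does not call any model API. The function name `cross_session_check`, the `min_support` threshold, and the sample themes are hypothetical.

```python
def cross_session_check(
    in_context: set[str],
    fresh_runs: list[set[str]],
    min_support: float = 0.5,
) -> dict[str, set[str]]:
    """Compare themes from a long conversation against fresh-session answers.

    survived: in-context themes that at least min_support of fresh runs raise
    suspect:  in-context themes that fresh runs mostly do not raise
    missing:  themes fresh runs raise (with enough support) that the
              long conversation never mentioned
    """
    if not fresh_runs:
        raise ValueError("need at least one fresh-session answer")

    def support(theme: str) -> float:
        return sum(theme in run for run in fresh_runs) / len(fresh_runs)

    survived = {t for t in in_context if support(t) >= min_support}
    suspect = in_context - survived
    fresh_only = set().union(*fresh_runs) - in_context
    missing = {t for t in fresh_only if support(t) >= min_support}
    return {"survived": survived, "suspect": suspect, "missing": missing}


# Themes from the churn-heavy conversation versus two fresh sessions.
result = cross_session_check(
    in_context={"onboarding redesign", "win-back workflows", "health scoring"},
    fresh_runs=[
        {"onboarding redesign", "pricing strategy", "technical debt"},
        {"onboarding redesign", "market expansion", "pricing strategy"},
    ],
)
print(result)
```

A theme landing in `suspect` does not prove contamination, and one landing in `survived` does not prove sound reasoning. The helper only flags where the long conversation and the fresh sessions disagree, which is where a human should look first.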

Systemic Implications

Context poisoning extends existing AIDK (AI Dunning-Kruger) research by identifying specific dynamic mechanisms through which structural epistemic limitations manifest and compound during dialogue.

For agentic systems, the implications are considerably worse. Context maintained across tool calls, decisions, and actions propagates contamination into code commits, database changes, communications, and resource allocation. Multi-agent architectures let contamination cross system boundaries. Individual verification points cannot catch systemic bias when the contamination behind it is correlated.

The operating principle remains absolute: “The system will never know when its context is poisoned. Design accordingly.”

JD Longmire is a Northrop Grumman Fellow, enterprise architect, and ordained minister researching AI and Christian apologetics. This post references an accompanying full paper on Zenodo with synthetic demonstrations, part of a broader research series including AIDK Framework, HCAE Framework, Persons Predict, and SOX for AI studies.
