The AIDK Framework: Why AI Can Never Think Like You

The Question Everyone Gets Wrong

When people discuss artificial intelligence, they typically place it on a spectrum: “AI is becoming smarter,” “We’re nearing human-level capabilities,” or “Artificial general intelligence is approaching.” These framings miss the essential point. Rather than measuring how much intelligence these systems have, we should ask what kind of intelligence they possess. The difference is categorical, not a matter of degree.

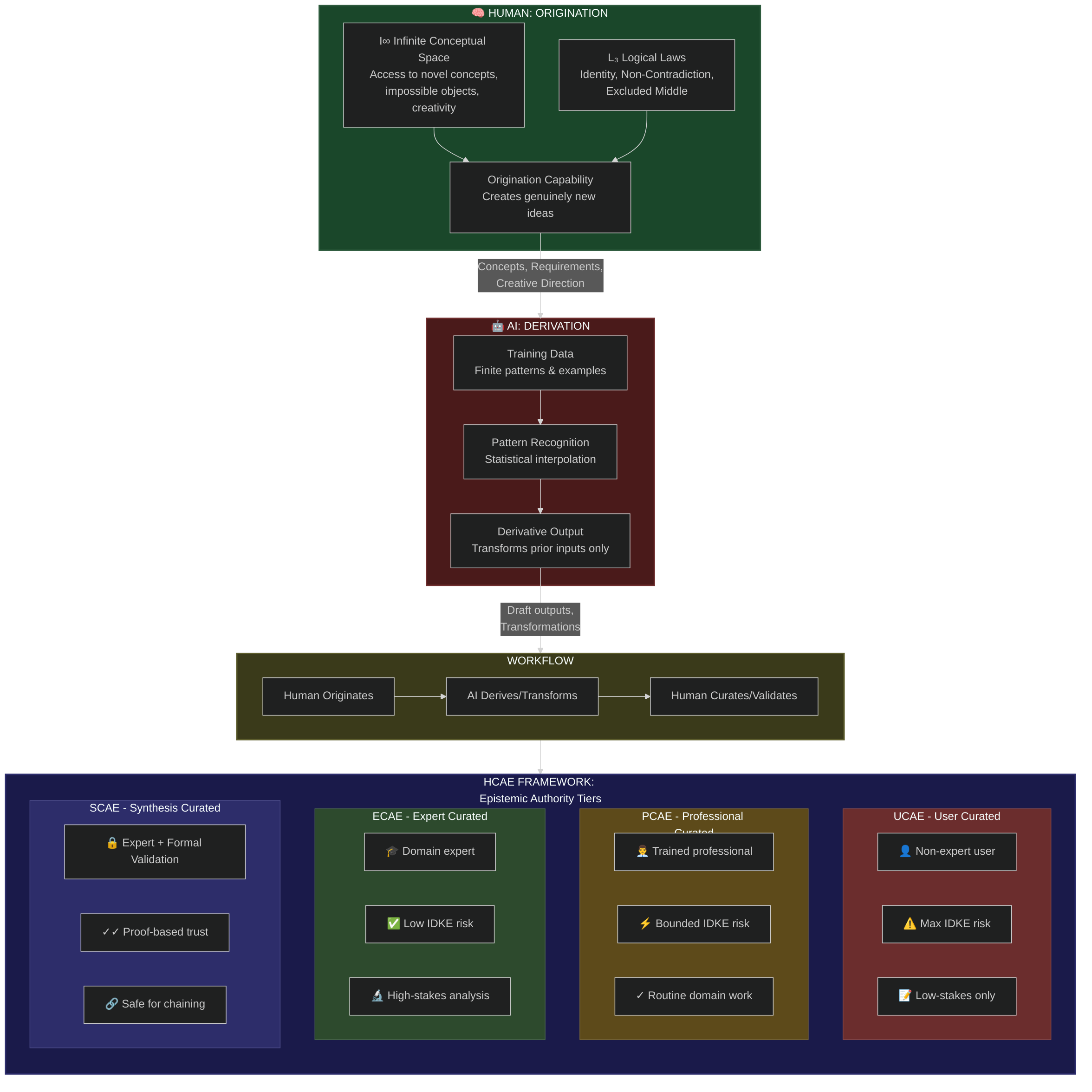

Origination vs. Derivation

The central thesis distinguishes human cognition from machine intelligence: Humans originate. AI derives. This difference reflects fundamental architectural constraints that additional training cannot overcome.

What Origination Means

Humans access what I term I∞ (Infinite Conceptual Space): an unbounded space of concepts, including impossible ones. Combined with L₃, the Three Logical Laws of Identity, Non-Contradiction, and Excluded Middle, this gives humans a genuine capacity for origination: generating thoughts that are not interpolations of prior inputs. For example, you can imagine “churple,” a color that does not exist and therefore could not have been drawn from anything you have seen.

What Derivation Means

AI systems function as pattern-recognition engines trained on finite datasets. They can remix existing patterns, interpolate between known points, and recombine prior inputs in novel ways. They cannot, however, access I∞ or conceive genuinely new concepts. Every output is “a sophisticated transformation, but a transformation nonetheless.”

I describe this limitation as the AI Dunning-Kruger effect (AIDK): systems display uniform confidence regardless of reliability, lack any mechanism for detecting the boundaries of their competence, and cannot self-correct through contact with reality.

The Workflow That Actually Works

The proposed framework involves three steps: humans originate concepts and requirements; AI accelerates execution through derivation and transformation; humans then curate and validate outputs. This Human-Curated, AI-Enabled (HCAE) approach matches deployment to epistemic capacity.

The HCAE Tiers

The framework stratifies deployment by the human evaluator’s expertise level:

UCAE (User-Curated, AI-Enabled): Non-expert users consuming outputs face the maximum risk of the Interactive Dunning-Kruger Effect (IDKE). This tier is suitable only for low-stakes drafting and brainstorming, because users cannot independently evaluate reliability and may mistake fluency for accuracy.

PCAE (Professional-Curated, AI-Enabled): Trained professionals working within their field can perform plausibility checks, catching gross errors, though subtle mistakes may pass undetected.

ECAE (Expert-Curated, AI-Enabled): Domain experts who can independently evaluate truth conditions keep IDKE risk low, making this tier appropriate for high-stakes analysis and decision support.

SCAE (Synthesis-Curated, AI-Enabled): Expert judgment combined with formal validation systems (proof assistants, compilers, test suites) provides proof-based trust, the standard required for outputs that will be chained or reused without human review.
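The tier list above can be read as a simple decision rule keyed on who curates the output. The sketch below is a minimal, hypothetical Python encoding of that reading, not part of the framework itself: the names `Evaluator`, `Tier`, `SUITABLE_FOR`, and `select_tier` are illustrative, and treating formal validation as an upgrade only when combined with expert judgment is an assumption drawn from the SCAE description.

```python
from enum import Enum


class Evaluator(Enum):
    """Hypothetical encoding of who curates the output, relative to its domain."""
    NON_EXPERT = "non-expert user"
    PROFESSIONAL = "trained professional in the field"
    DOMAIN_EXPERT = "domain expert"


class Tier(Enum):
    UCAE = "User-Curated, AI-Enabled"
    PCAE = "Professional-Curated, AI-Enabled"
    ECAE = "Expert-Curated, AI-Enabled"
    SCAE = "Synthesis-Curated, AI-Enabled"


# Appropriate uses, paraphrased from the tier descriptions.
SUITABLE_FOR = {
    Tier.UCAE: "low-stakes drafting and brainstorming only",
    Tier.PCAE: "work where plausibility checks suffice; subtle errors may slip through",
    Tier.ECAE: "high-stakes analysis and decision support",
    Tier.SCAE: "outputs chained or reused without human review",
}


def select_tier(evaluator: Evaluator, formally_validated: bool = False) -> Tier:
    """Map 'who has epistemic authority to evaluate this output?' to a tier.

    Assumption (hypothetical): formal validation such as a proof assistant,
    compiler, or test suite raises the tier only alongside expert judgment.
    """
    if evaluator is Evaluator.DOMAIN_EXPERT:
        return Tier.SCAE if formally_validated else Tier.ECAE
    if evaluator is Evaluator.PROFESSIONAL:
        return Tier.PCAE
    # Non-expert users cannot independently evaluate reliability (UCAE);
    # in this sketch, formal validation alone does not change that.
    return Tier.UCAE


if __name__ == "__main__":
    tier = select_tier(Evaluator.DOMAIN_EXPERT, formally_validated=True)
    print(tier.name, "->", SUITABLE_FOR[tier])
```

Run as a script, it prints `SCAE -> outputs chained or reused without human review`. Keying the rule on the evaluator rather than on the model reflects the framework's point that the deciding question is who can evaluate the output, not how capable the system is.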

The Practical Implication

Rather than asking whether AI is intelligent enough, ask: “Who has epistemic authority to evaluate this output?” The answer determines whether deployment is appropriate, which HCAE tier applies, and whether the workflow builds reliability or accumulates technical debt.

Under human judgment, AI is a powerful derivation accelerator. The caution is never to treat these systems as epistemic peers; deployment decisions still require the wisdom that human judgment provides.

The choice between HCAE and IDKE, between relying on human curation and succumbing to interactive Dunning-Kruger effects, deserves careful consideration.

Read the full framework: AI Dunning-Kruger (AIDK): A Framework for Understanding Structural Epistemic Limitations in AI Systems

This framework was developed under the ECAE model it describes, with derivational contributions from Claude, Grok, ChatGPT, Perplexity, and Gemini.

James (JD) Longmire is a Northrop Grumman Fellow conducting independent research on AI epistemology and governance.
