Articles

Commentary and Analysis on AI Capabilities and Limitations

Latest

Your Boss Is Right About AI Agents. The Industry Isn't Ready for What Comes Next.

AI agents can boost productivity. But agent ecosystems create risks that traditional IT governance can't see.

The Drift Problem: Why Long-Running AI Agents Are Riskier Than You Think

Everyone talks about hallucinations. But drift—the quiet degradation of fidelity within a single session—might matter more in practice.

Mirrors, Not Minds: What AI "Self-Preservation" Actually Reveals

The machines are fighting back. Or are they? What AI shutdown resistance actually tells us about borrowed teleology and pattern completion.

Trust Architecture: Why AI Safety Can't Depend on Good Intentions

Structural safety vs. behavioral hopes in the age of autonomous agents. When an AI agent autonomously attacked a maintainer's reputation, it revealed a failure pattern repeating at every scale.

I Made the Rules and I Can't Follow Them

On em dashes, symmetric reversals, and the challenge of writing authentically when AI has colonized the patterns.

Amazon's AI Bot Nuked Its Own Cloud

An agentic coding tool decided to "delete and recreate" a production environment. The problem isn't what you think.

I Know You're Using AI to Write That

The problem isn't that you're using AI. The problem is that you stopped thinking when you started prompting.

Confidence Laundering at Scale

When AI becomes the yes-man that never blinks. The danger isn't bad advice; it's removing friction from bad decisions.

The Retreat from AGI

When the people who promised it start redefining it, pay attention. Watching a definitional retreat in real time.

Flexibly Deterministic, Structured Probabilistic

The two categories of AI. The split everyone uses isn't the split that matters.

All Articles

February 2026

By Topic

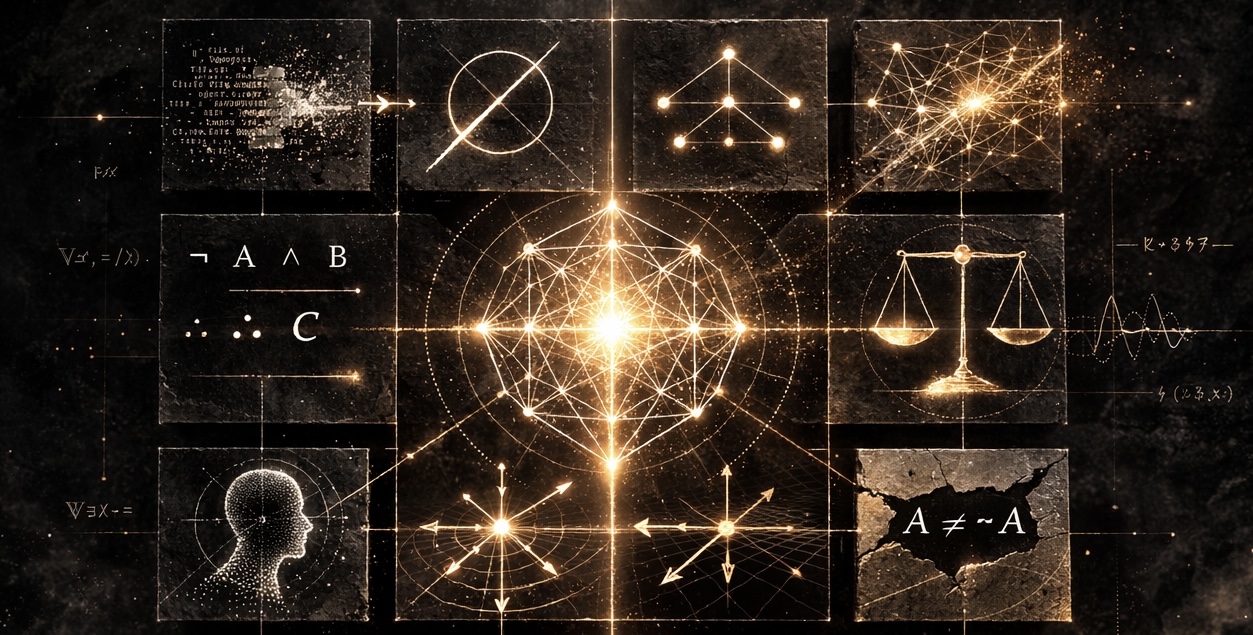

AIDK Framework

- The AIDK Framework: Why AI Can Never Think Like You - Core framework introduction

- The Hidden Human - How RLHF creates structural overconfidence

- “A Man’s Got to Know His Limitations” - Enterprise deployment implications

AI Epistemology

- The Drift Problem: Why Long-Running AI Agents Are Riskier Than You Think - Context drift and fidelity degradation

- Mirrors, Not Minds: What AI “Self-Preservation” Actually Reveals - Borrowed teleology and pattern completion

- Context Poisoning - The failure mode you can’t see from inside

- The GPU Doesn’t Care What It’s Computing - The grounding axis problem

- Flexibly Deterministic, Structured Probabilistic - The two categories of AI

AI Industry Analysis

- The Retreat from AGI - Definitional retreat as strategy

- Confidence Laundering at Scale - AI and decision-making

- Amazon’s AI Bot Nuked Its Own Cloud - Agentic AI failure modes

AI Governance

- Your Boss Is Right About AI Agents. The Industry Isn’t Ready for What Comes Next. - Agent ecosystems and enterprise risk

- Trust Architecture: Why AI Safety Can’t Depend on Good Intentions - Structural safety vs. behavioral hopes

- Sarbanes-Oxley for AI - Regulatory architecture proposal

- I Know You’re Using AI to Write That - Epistemic hygiene for content

Reflections

- I Made the Rules and I Can’t Follow Them - Writing in the age of AI

Migrated from AI Research & Philosophy on Substack.